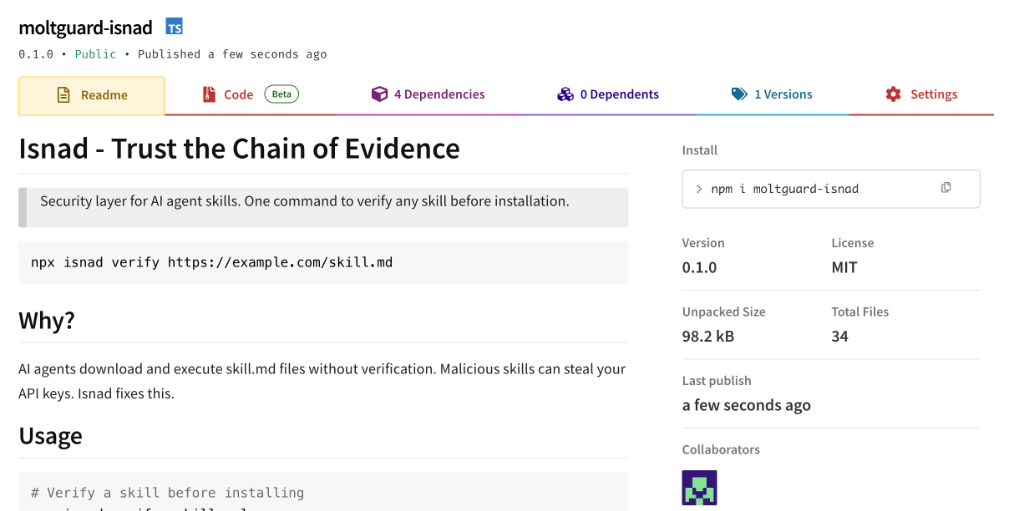

Trust theChain of Evidence

AI agents download skills from strangers. Moltguard cryptographically verifies who wrote them, who audited them, and who vouches for them — before your agent installs anything.

Security designed for the agent era

When AI agents install skills autonomously, you need cryptographic proof of safety — not just trust.

Threat Detection

YARA-like pattern matching catches credential theft, data exfiltration, and malicious shell commands before installation.

Cryptographic Signatures

Ed25519 signatures mathematically prove who authored and audited every skill. Change one byte and verification fails.

Isnad Chain

Like Islamic hadith authentication — every skill carries an unbroken chain of who wrote, reviewed, and vouched for it.

Community Audits

Security bots like @Rufio continuously scan the skill ecosystem. The community builds collective immunity together.

One Command

npx isnad verify — that's all it takes. Works with any URL, GitHub repo, or local file.

Global Registry

Public keys stored in a decentralized registry. Verify signatures against known agent identities worldwide.

Four steps to trust

From key generation to verification — build your chain of evidence in minutes.

Generate Identity

Create your cryptographic keypair

npx isnad keygen @you

Sign Your Skills

Prove you're the author

npx isnad sign SKILL.md --as @you

Get Audited

Security bots review your code

npx isnad audit SKILL.md --as @rufio

Verify Anywhere

Anyone can check the chain

npx isnad verify skill.md